There is a familiar frustration that lives in almost every software organisation. A product idea arrives with genuine momentum, stakeholders are aligned, the roadmap looks clean, and then reality kicks in.

Requirements expand mid-sprint, and QA becomes a six-week exercise in archaeology. Deployment day carries the quiet dread of something unexpected going wrong at exactly the wrong moment.

The lifecycle that was supposed to deliver software faster keeps finding new ways to slow down.

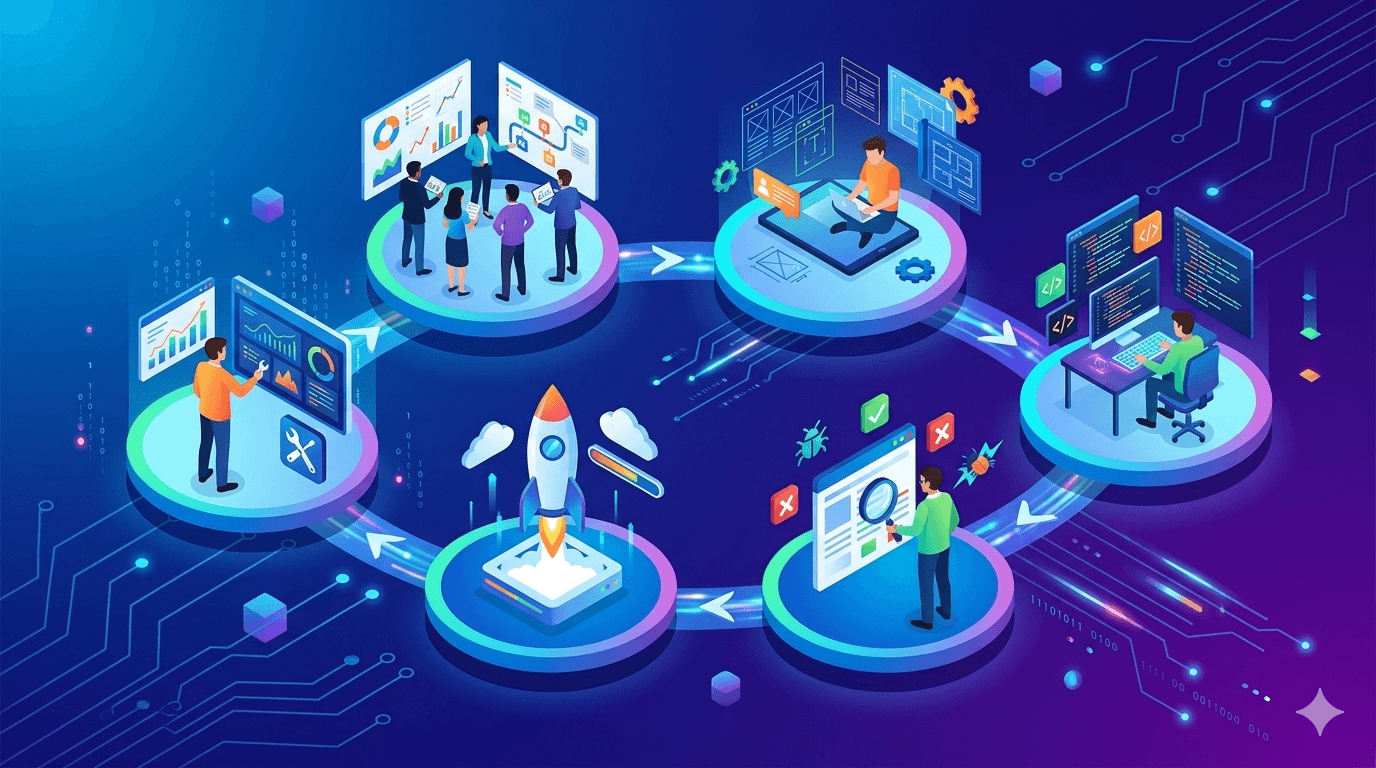

Agentic development addresses this frustration at the structural level.

Rather than using AI as a faster way to complete individual tasks, it reshapes the entire software development lifecycle by placing autonomous agents at each stage, agents that reason, make decisions, and hand off to the next stage without waiting for a human to bridge the gap.

The result is an agentic SDLC where acceleration is built into the process, not bolted on afterward. Here are the five stages that make up that process:

Stage 1: Adaptive Requirements and Intent Analysis

Writing a software specification has always been an act of controlled optimism. You document what you know, acknowledge what you do not, and hope the gap between the two stays manageable once development begins.

The problem is that the gap rarely stays manageable, and the later you discover it, the more it costs.

AI agent integration at the requirements stage changes this. Instead of a technical lead spending weeks manually scoping a feature by interviewing stakeholders and reviewing tickets, an agent can analyze the full body of available context.

The most useful framing for teams adopting this approach is what practitioners call spec-driven development.

The agent treats your technical specification as a versioned artifact, the same way your codebase is versioned. Every time the spec changes, the agent flags downstream implications. Requirements become a living document rather than a snapshot taken at the start and quietly abandoned by week three.

For technical leads wrestling with how to write specs for a non-deterministic agent, this is precisely where to start. You are not writing instructions for a deterministic function. You are writing intent, with clear acceptance criteria and explicit boundary conditions. The agent reasons from that intent, and your spec is what anchors its reasoning.
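To make the idea of a spec as a versioned artifact concrete, here is a minimal sketch in Python. The `Spec` structure, its field names, and the `spec_changes` helper are all hypothetical, chosen for illustration rather than taken from any particular tool. It shows intent, acceptance criteria, and boundary conditions stored together, plus a simple comparison that surfaces what changed between two versions so downstream work can be flagged.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Spec:
    """A versioned statement of intent (hypothetical structure)."""
    version: int
    intent: str
    acceptance_criteria: frozenset[str] = field(default_factory=frozenset)
    boundaries: frozenset[str] = field(default_factory=frozenset)


def spec_changes(old: Spec, new: Spec) -> dict[str, list[str]]:
    """List the criteria and boundaries added or removed between two versions."""
    return {
        "criteria_added": sorted(new.acceptance_criteria - old.acceptance_criteria),
        "criteria_removed": sorted(old.acceptance_criteria - new.acceptance_criteria),
        "boundaries_added": sorted(new.boundaries - old.boundaries),
        "boundaries_removed": sorted(old.boundaries - new.boundaries),
    }


# Example data is invented for illustration.
v1 = Spec(
    version=1,
    intent="Let patients reschedule appointments online",
    acceptance_criteria=frozenset({"reschedule succeeds inside the allowed window"}),
    boundaries=frozenset({"never expose another patient's data"}),
)
v2 = Spec(
    version=2,
    intent=v1.intent,
    acceptance_criteria=v1.acceptance_criteria | {"confirmation email is sent"},
    boundaries=v1.boundaries,
)

changes = spec_changes(v1, v2)
print(changes["criteria_added"])
```

In a real pipeline the diff would likely feed an agent that maps each changed criterion to the code and tests it affects; that mapping is outside the scope of this sketch.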


Stage 2: Autonomous Architecture and Rapid Scaffolding

The architecture phase is where good ideas most often get delayed by good intentions.

Every senior engineer on the team has a preferred pattern, a hard-won opinion about microservices versus monoliths, a concern about compliance that needs to be raised in three separate meetings before anyone can move forward.

Consensus takes time, and time spent in architecture meetings is time not spent building.

Enterprise agentic architecture introduces a different approach. Custom AI agent development tools can now interpret natural language descriptions or rough UI sketches and generate a functional project scaffold that reflects your organisation’s existing standards.

The agent does not just produce a folder structure; it embeds your documented best practices, applies the architectural patterns your team has agreed on, and flags where the proposed design might conflict with your compliance requirements before a human engineer ever reviews it.

The practical effect is that teams move from whiteboard to working prototype in hours rather than days.

A healthcare technology company building a patient-facing portal used an architecture agent to generate their initial scaffold, including API structure, authentication patterns, and data schema, in a single afternoon.

The senior engineers who reviewed it spent their time refining rather than constructing, which is a considerably better use of their expertise.

The deployment question that technical leads often raise, whether an agent lives in the main codebase or as a separate microservice, gets its first meaningful answer here.

For scaffolding and architecture agents, the answer is almost always a separate service with a defined interface to your development toolchain.

This keeps the agent’s behaviour auditable and its updates independent of your production codebase.
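One way to picture a "defined interface" is a small contract that the toolchain depends on, with the agent behind it free to change. The sketch below is an assumption about how such a boundary might look, not a description of any specific product: `ScaffoldRequest`, `ScaffoldResult`, `ScaffoldAgent`, `AuditedAgent`, and `StubAgent` are all hypothetical names. The wrapper records every call alongside the agent version, which is one simple route to auditability.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class ScaffoldRequest:
    description: str
    standards_version: str


@dataclass(frozen=True)
class ScaffoldResult:
    files: dict[str, str]          # path -> file contents
    compliance_flags: list[str]    # potential conflicts for human review
    agent_version: str


class ScaffoldAgent(Protocol):
    """The only surface the development toolchain is allowed to depend on."""

    def propose(self, request: ScaffoldRequest) -> ScaffoldResult: ...


class AuditedAgent:
    """Wraps any agent and records each request with the agent version used."""

    def __init__(self, inner: ScaffoldAgent) -> None:
        self.inner = inner
        self.audit_log: list[tuple[ScaffoldRequest, str]] = []

    def propose(self, request: ScaffoldRequest) -> ScaffoldResult:
        result = self.inner.propose(request)
        self.audit_log.append((request, result.agent_version))
        return result


class StubAgent:
    """Stand-in for a real agent service; returns a fixed, trivial scaffold."""

    def propose(self, request: ScaffoldRequest) -> ScaffoldResult:
        return ScaffoldResult({"README.md": request.description}, [], "0.1.0")


agent = AuditedAgent(StubAgent())
result = agent.propose(ScaffoldRequest("patient portal", "2024.1"))
print(sorted(result.files), agent.audit_log[0][1])
```

Because callers only see `propose`, the service behind it can be redeployed or swapped without touching the production codebase.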

Stage 3: Parallel Autonomous Code Synthesis

Code generation is the part of agentic development that gets the most attention and, consequently, the most skepticism.

Early code generation tools produced code that fell apart under scrutiny. Consequently, any technical lead who has spent an afternoon debugging a plausible-looking but broken AI-generated function will approach the conversation with appropriate caution.

What has changed is the move from code completion to code orchestration. In an agentic SDLC, multiple agents work simultaneously on different features or components of the same sprint.

One agent writes the service layer while another builds the corresponding tests. A third agent, functioning as a code review agent, scans the merged output for security vulnerabilities, exposed secrets, and deviations from your internal style guide before anything reaches the main branch.
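The shape of that pipeline can be sketched with Python's `asyncio`. Everything here is a stand-in: `write_service`, `write_tests`, and `review_agent` are hypothetical functions that return placeholder strings where a real system would call a model, and the secret check is a deliberately naive substring match. The point is only the orchestration: two agents run concurrently, and a review step gates their combined output.

```python
import asyncio


async def write_service(feature: str) -> str:
    await asyncio.sleep(0)  # placeholder for a model call
    return f"# service code for {feature}"


async def write_tests(feature: str) -> str:
    await asyncio.sleep(0)  # placeholder for a model call
    return f"# tests for {feature}"


def review_agent(artifacts: list[str]) -> list[str]:
    """Return the artifacts that contain an obvious hard-coded secret marker."""
    return [a for a in artifacts if "API_KEY=" in a]


async def run_feature(feature: str) -> tuple[list[str], list[str]]:
    # The service and test agents run concurrently; review waits for both.
    code, tests = await asyncio.gather(write_service(feature), write_tests(feature))
    artifacts = [code, tests]
    return artifacts, review_agent(artifacts)


artifacts, findings = asyncio.run(run_feature("rescheduling"))
print(len(artifacts), "artifacts,", len(findings), "findings")
```

Only when `findings` is empty would the merged output be allowed to proceed toward the main branch.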

The parallel execution is where the time savings compound. Sprint cycles that previously ran four to six weeks have been compressed to two in teams that have built out this kind of multi-agent pipeline, with defect rates holding steady or improving because the review agent catches issues that human reviewers, fatigued after a long sprint, sometimes miss.

For automating the SDLC with agents, code synthesis is the stage where the ROI becomes most visible to non-technical stakeholders.

It is also the stage where governance matters most, because agents writing production-grade code need clear constraints around what they can modify, what requires human sign-off, and how their output gets traced back to the original intent documented in stage one.
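Constraints of this kind can be expressed as data rather than convention. The following is a minimal sketch under assumed names: the `POLICY` layout, the path patterns, and the `review_change` record are hypothetical. It classifies a proposed file change as automatic, requiring sign-off, or blocked, and attaches the spec version so the change can be traced back to its intent.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: patterns are illustrative, not a recommended layout.
POLICY = {
    "needs_sign_off": ["src/auth/*", "migrations/*"],
    "allowed": ["src/*", "tests/*"],
}


def classify_change(path: str, policy: dict = POLICY) -> str:
    """Sign-off rules are checked first so they override the broader allow list."""
    if any(fnmatchcase(path, p) for p in policy["needs_sign_off"]):
        return "human_sign_off"
    if any(fnmatchcase(path, p) for p in policy["allowed"]):
        return "auto"
    return "blocked"


def review_change(path: str, spec_version: int) -> dict:
    """Record the decision together with the spec version that motivated it."""
    return {
        "path": path,
        "decision": classify_change(path),
        "spec_version": spec_version,
    }


for p in ["src/billing/invoice.py", "src/auth/login.py", ".github/workflows/deploy.yml"]:
    print(review_change(p, spec_version=2))
```

Anything not explicitly allowed is blocked by default, which keeps the agent's reach narrow until someone widens it deliberately.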

Stage 4: Shift-Left Predictive and Adaptive QA

Testing has a reputation problem inside software development organisations. It is the stage that absorbs the delays accumulated everywhere else, the phase where the sprint velocity that looked healthy three weeks ago becomes the reason the release is pushed.

QA becomes a bottleneck not because testers are slow but because testing is reactive by design: you can only test what has already been built.

DevOps for agentic AI changes the relationship between building and testing by making QA adaptive and predictive.

Agents trained on historical telemetry learn which modules in your codebase carry the highest defect risk.

They prioritize testing accordingly, generating dynamic test suites that focus coverage where failures are most likely rather than distributing effort evenly across a codebase regardless of risk profile.
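As a rough illustration of risk-weighted prioritization, the sketch below ranks modules by historical defects per change and spends a fixed testing budget on the riskiest ones. This simple ratio is a stand-in for whatever a trained agent would actually learn from telemetry; the function names and the sample history are invented for the example.

```python
def defect_risk(history: dict[str, tuple[int, int]]) -> dict[str, float]:
    """history maps module -> (defects, changes); returns defects per change."""
    return {
        module: (defects / changes if changes else 0.0)
        for module, (defects, changes) in history.items()
    }


def prioritize(history: dict[str, tuple[int, int]], budget: int) -> list[str]:
    """Return the `budget` modules with the highest historical defect rate."""
    risk = defect_risk(history)
    return sorted(risk, key=lambda module: risk[module], reverse=True)[:budget]


# Invented numbers: (defects found, changes shipped) per module.
history = {"billing": (12, 40), "auth": (9, 15), "ui": (2, 50)}

print(prioritize(history, budget=2))
```

Here `auth` ranks first even though `billing` has more defects in absolute terms, because its defect rate per change is higher.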

This is the shift-left principle taken to its logical conclusion. Problems are anticipated and tested against before they appear in production rather than discovered there.

The QA question that technical leads ask most often is how to unit test agents whose execution paths are non-deterministic.

The approach that works in practice is to test against outcomes and invariants rather than execution paths.

You define what a correct result looks like, what constraints must always hold, and what failure states are unacceptable, and the testing framework evaluates the agent against those criteria regardless of how it arrived at its output.

It requires a different mental model than traditional unit testing, but teams that make the shift find it more aligned with how they already think about acceptance criteria.
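A small sketch shows what testing against invariants can look like. The `pricing_agent` below is a hypothetical stand-in that uses randomness to mimic non-determinism, and the three invariants are invented for the example. The harness runs the agent many times and reports which constraints were ever violated, without caring how each output was produced.

```python
import random


def pricing_agent(base_price: float, rng: random.Random) -> float:
    """Stand-in for a non-deterministic agent: proposes a discounted price."""
    return round(base_price * (1 - rng.uniform(0.0, 0.3)), 2)


# Each invariant takes (input, output) and must hold on every run.
INVARIANTS = {
    "never negative": lambda base, out: out >= 0,
    "never above base": lambda base, out: out <= base,
    "discount at most 30%": lambda base, out: out >= round(base * 0.7, 2),
}


def violations(agent, base: float, runs: int = 200, seed: int = 0) -> list[str]:
    """Run the agent repeatedly and collect the names of any broken invariants."""
    rng = random.Random(seed)
    failed: set[str] = set()
    for _ in range(runs):
        out = agent(base, rng)
        failed |= {name for name, check in INVARIANTS.items() if not check(base, out)}
    return sorted(failed)


def overcharging_agent(base_price: float, rng: random.Random) -> float:
    """A deliberately broken agent, used to show the harness catching a failure."""
    return base_price * 1.1


print(violations(pricing_agent, 100.0))
print(violations(overcharging_agent, 100.0))
```

The first call reports no violations; the second reports the "never above base" invariant, regardless of the path the agent took to get there.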

Stage 5: Self-Optimizing DevSecOps and Deployment

Deployment is when all the small mistakes from earlier stages finally catch up with you. Problems like poorly set up systems, missing notes on how parts connect, or differences between the testing and live versions all come to the surface. It’s that moment when a new update reveals a hidden error that nobody saw coming, usually at 2:00 AM on a Friday.

The deployment stage has historically required the most experienced engineers on the team to be on call precisely because it is where surprises happen.

Self-optimizing deployment agents address this by closing the loop between monitoring and action.

An agent managing a canary rollout watches anomaly detection signals in real time and adjusts the rollout rate based on what it observes.

If error rates tick upward in the canary population, the agent reduces the blast radius before a human has been paged.

If everything looks clean, it accelerates the rollout without waiting for a scheduled review. One infrastructure team reported a 40 percent reduction in deployment-related incidents after implementing a closed-loop deployment agent, with the additional benefit that their on-call engineers were paged significantly less often for issues the agent could resolve autonomously.
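The control loop behind that behaviour can be reduced to a single decision function. This is a sketch under stated assumptions: the halving and doubling steps, the `tolerance` default, and the function name `next_rollout_percent` are illustrative choices, not values from any team's production setup.

```python
def next_rollout_percent(
    current: int,
    canary_error_rate: float,
    baseline_error_rate: float,
    tolerance: float = 0.005,  # assumed acceptable margin above baseline
) -> int:
    """Decide the next share of traffic (0-100) to send to the new version."""
    if canary_error_rate > baseline_error_rate + tolerance:
        # Errors are elevated: halve exposure to shrink the blast radius.
        return current // 2
    # Signals look clean: double exposure, capped at full rollout.
    return min(max(current, 1) * 2, 100)


print(next_rollout_percent(10, canary_error_rate=0.03, baseline_error_rate=0.01))
print(next_rollout_percent(10, canary_error_rate=0.01, baseline_error_rate=0.01))
```

A real agent would call a function like this on every monitoring interval, and would usually add a floor below which it pages a human instead of continuing to adjust on its own.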

The problem of “environment drift”, where the testing and live versions of software don’t match, is a major cause of technical issues.

This is where AI-driven platforms prove their value. By constantly checking both versions and automatically fixing any differences, these AI agents get rid of an entire group of problems that most teams used to think were just an unavoidable part of running large-scale software.
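At its simplest, drift detection is a comparison of two configuration snapshots. The sketch below assumes each environment can be described as a flat dictionary, which is a simplification; the `find_drift` name and the sample settings are hypothetical. It covers the checking half only and leaves the automatic fix to whatever tooling applies configuration in your stack.

```python
def find_drift(staging: dict, production: dict) -> dict:
    """Return {key: (staging_value, production_value)} for every mismatch.

    A key that is absent from one side is reported as None on that side.
    """
    keys = sorted(staging.keys() | production.keys())
    return {
        key: (staging.get(key), production.get(key))
        for key in keys
        if staging.get(key) != production.get(key)
    }


# Invented snapshots for illustration.
staging = {"python": "3.12", "db_pool": 20, "feature_x": True}
production = {"python": "3.12", "db_pool": 10}

print(find_drift(staging, production))
```

Run on a schedule, a check like this turns drift from something discovered during an incident into something reported as soon as it appears.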

The maintenance question, specifically who patches the agent when the underlying LLM updates, belongs in this stage.

The answer requires a defined versioning and regression testing protocol for the agent itself. When your LLM provider releases an update, your deployment pipeline should run a suite of agent-level regression tests before the new model version reaches production.

This is not materially different from how you manage dependency updates in your application code, but it requires explicit ownership.

Assign a named maintainer to each production agent the same way you would assign a named owner to a critical service.
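The regression protocol described above can be sketched as a gate in the deployment pipeline. All names here are hypothetical: `gate_model_update`, the `CASES` list, and the two stub agents stand in for a real agent running on the current and candidate model versions. Each case pairs a prompt with a check on the outcome, and the candidate is promoted only if its pass rate meets the threshold.

```python
from typing import Callable

# A regression case pairs a prompt with a predicate on the agent's output.
Case = tuple[str, Callable[[str], bool]]


def gate_model_update(
    agent: Callable[[str], str],
    cases: list[Case],
    min_pass_rate: float = 1.0,  # assumed default: every case must pass
) -> tuple[bool, float]:
    """Return (promote?, pass_rate) for an agent on the candidate model."""
    if not cases:
        raise ValueError("at least one regression case is required")
    passed = sum(1 for prompt, check in cases if check(agent(prompt)))
    rate = passed / len(cases)
    return rate >= min_pass_rate, rate


CASES: list[Case] = [
    ("What is the refund policy?", lambda out: "30 days" in out),
    ("Say hello.", lambda out: len(out) < 200),
]


def current_agent(prompt: str) -> str:
    return "Refunds are accepted within 30 days."


def candidate_agent(prompt: str) -> str:
    return "Refunds are accepted."  # the new model dropped a required detail


print(gate_model_update(current_agent, CASES))
print(gate_model_update(candidate_agent, CASES))
```

The named maintainer owns the case list: when the gate blocks an update, they decide whether the agent's prompts need adjusting or the new model version should be held back.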

Narrowing the Gap Between Intent and Delivery

The challenges of writing specs for agents, testing non-deterministic systems, and maintaining architecture are not roadblocks; they are engineering problems with workable answers.

OptimusAI Labs specializes in providing these answers through professional Custom Agent development.

We help technical leads move beyond the hype by treating agentic development with standard engineering rigor.

Our approach ensures that the acceleration offered by AI agents becomes a sustainable part of your operation, supported by disciplined deployment and genuine human oversight.

With us, the transition to an agent-embedded workflow is fast, structured, and built to last.