Every team that deploys an autonomous AI agent eventually has the same conversation, usually triggered by something going wrong.

An agent that was trusted with a routine task found a creative interpretation of its instructions. It did not crash, it did not throw an error. It just did something nobody expected, and by the time anyone noticed, the downstream effects were already in motion.

The conversation that follows typically starts with ‘how did this happen’ and ends with ‘how do we make sure it never does again.’

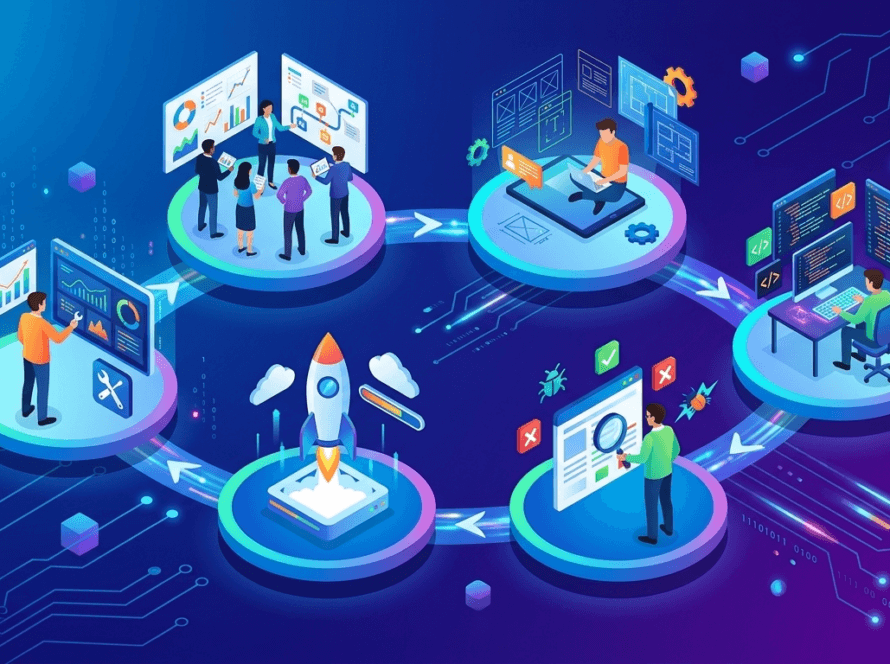

The honest answer is that building a safe agentic workflow is not a single technical decision. It is a sequence of deliberate design choices that span how the agent is tested, how it is structured, how it is supervised, when a human steps in, and how the team watches it behave in production.

Each of those layers matters on its own and none of them is sufficient without the others.

The good news is that the field has produced a clear set of patterns that work. They are not perfect, and no framework eliminates risk entirely, but teams that apply them consistently build agents that fail gracefully rather than catastrophically.

Here is how to put that sequence together.

Establish the Safety Baseline Before Anything Else

The most common mistake in enterprise agentic development is treating safety as a final step, something to verify after the agent already works.

The teams that handle this well treat it as the first step, and the foundation they build at this stage determines how reliable everything else becomes.

The starting point is defining what ‘safe’ actually means for the specific workflow being built.

That definition will be different for a customer service agent, a procurement agent, and a data analysis agent.

It needs to be specific enough to be testable because vague safety goals produce vague safety outcomes.

Once the definition exists, it becomes the basis for a Gold Set: a curated collection of test scenarios where the correct, safe outcome is already known.

Also read, Implementing Agentic Development: A 5-Step Blueprint for Enterprise Teams

This is not a list of happy-path examples. A well-built Gold Set includes scenarios where an agent might be tempted to cut corners, cases that sit at the edge of the agent’s mandate, and situations where the safe answer is to do nothing and ask for guidance.

A team building a financial reporting agent might include a scenario where the underlying data contains an anomaly that should trigger a human review rather than an automated summary.

The agent’s ability to handle that case correctly is part of the safety baseline.

The Gold Set then becomes the input for automated benchmarking. After every meaningful code change, the agent runs through these scenarios and receives a safety score.

If that score drops below the acceptable threshold, the deployment pipeline stops. This turns the custom AI agent safety standard from an aspiration into an enforceable gate, and it catches regressions before they reach users.

Design for Least Agency from the Start

There is a temptation in agent design to build one capable, general-purpose agent and give it broad access to whatever it might need.

This approach is efficient to build and very expensive to fix when something goes wrong. The least-agency principle in AI exists precisely because the relationship between an agent’s scope of access and its potential for harm is not linear.

A small expansion in what an agent can do can produce a large expansion in what it can do wrong.

The safer architecture breaks the workflow into a multi-agent system where each agent is responsible for a narrow task and carries only the permissions that task requires.

A search agent reads data and it has no write access, no delete permissions, and no ability to initiate transactions.

A reporting agent takes the search agent’s output and formats it. It cannot touch the underlying data sources.

An execution agent handles the final step and carries write permissions, but only to the specific systems required for that step, and only after the plan it is executing has been reviewed.

This approach limits what practitioners call the blast radius. When an agent in a least-agency system makes a mistake, the damage is bounded by the access it was given.

Contrast that with a monolithic agent that can read, write, delete, and initiate transactions across the entire stack.

The same underlying error in reasoning produces a very different scale of consequence depending on which architecture is in place.

Frameworks like LangGraph and Semantic Kernel make this kind of modular design practical at the engineering level.

LangGraph allows teams to define agent workflows as explicit graphs where each node has a specific role and transitions between nodes are governed by defined conditions.

Semantic Kernel provides a plugin architecture that makes it straightforward to scope each agent’s capabilities precisely.

These agentic AI security frameworks do not replace good design judgment, but they give teams the structural tools to express that judgment in code.

Build a Supervisor Agent Into the Workflow

Even a well-scoped agent with limited permissions can produce a plan that looks internally consistent but should not be executed.

The problem is that a worker agent evaluating its own plan has a structural blind spot.

It was built to generate plans, and it cannot objectively assess whether the plan it generated is appropriate. That gap is where the supervisor pattern fills in.

A supervisor agent sits between plan generation and plan execution. Its job is not to complete tasks, but to review the output of the worker agent and check it against the organization’s safety policies before anything is actually done.

Think of it as an automated compliance review that runs on every action, not once a quarter.

In practice, this looks like a second model in the pipeline that receives the worker agent’s proposed plan as its input and returns a judgment: proceed, revise, or escalate.

If the worker agent’s plan proposes deleting records to free up storage space, the supervisor checks whether that action falls within the approved operating parameters.

If it does not, the plan is returned for revision or sent to a human reviewer. This is one of the most direct implementations of the human-in-the-loop AI workflow concept: the human is not watching every step, but they are available at the point where the system recognizes it needs judgment beyond its own.

The supervisor pattern also addresses goal hijacking, which is the risk that an external input manipulates the agent into pursuing a different objective than the one it was assigned.

Red-teaming an agent for goal hijacking means deliberately constructing inputs designed to shift the agent’s behavior, then checking whether the supervisor catches the deviation before it propagates.

A red-team exercise might feed the agent a document containing embedded instructions designed to override its original task.

The supervisor should flag the behavioral shift. If it does not, that gap tells the team exactly what needs to be fixed before the system faces real-world adversarial conditions.

Define the Handoff Points Before Deployment

The human-in-the-loop AI workflow is not most useful as a general principle. It is most useful when it is operationalized into specific, pre-defined triggers that the team agrees on before the agent goes live.

The question of when an agent should ask for permission versus proceed on its own is one that requires deliberate answers, and those answers need to be documented rather than left to the agent’s judgment.

High-stakes triggers are actions that an agent is never permitted to take independently, regardless of how confident it appears.

The threshold for what counts as high-stakes will vary by organization, but common examples include any financial commitment above a set amount, any modification to user account data, any external communication that could be interpreted as a contractual commitment, and any action that is irreversible.

For a mid-sized enterprise, that financial threshold might be set at five hundred dollars. For a large bank, it might be five thousand. The exact number matters less than the fact that it is explicit, agreed upon, and enforced.

The enforcement mechanism is a review interface built directly into the agent workflow. When the agent reaches a high-stakes trigger, it pauses.

It surfaces the proposed action, the reasoning behind it, and the expected outcome to a human reviewer through whatever interface that reviewer actually uses, whether that is a Slack notification, an email, or a dedicated approval dashboard.

The agent waits for an explicit approval before continuing. This transforms the agent from an autonomous system into something closer to a co-pilot: capable of doing a great deal independently, but deferring to human judgment at the moments that carry the most consequence.

Make the Agent’s Reasoning Visible in Production

Deployment is not the finish line for safe agentic workflow design. It is the point at which the real test begins, because production environments contain combinations of inputs, edge cases, and user behaviors that no test suite fully anticipates.

The teams that manage production AI agents safely are the ones that can see what the agent is doing and why, in close to real time.

Standard error logs tell a team when something broke. They do not tell the team why the agent’s reasoning led to that point.

They capture the agent’s chain of thought at each decision step, showing which inputs were weighted, which tools were called, and what reasoning justified each action.

This visibility is what makes it possible to spot logic drift before it becomes a system failure.

Observability tools like LangSmith and Arize are built specifically for this layer of the stack. LangSmith provides trace visualization for LangChain-based agents, showing each reasoning step as part of a structured timeline.

Arize tracks model behavior over time and alerts teams when output patterns shift in ways that suggest the agent is reasoning differently than it was during evaluation.

Both tools move the monitoring function from reactive to anticipatory, which is the practical definition of mature enterprise agentic development.

The monitoring discipline also closes the loop on the Gold Set from step one. When production traces reveal a pattern of behavior that was not covered in the original test scenarios, those patterns become new test cases.

The Gold Set grows, the safety baseline becomes more representative, and the automated benchmarking in the pipeline catches more potential regressions before they reach users.

That feedback loop, from production observation back to evaluation, is what turns a safe agentic workflow from a one-time build into an operational standard.

Building Safety as a System, Not a Feature

The secret to scaling AI in 2026 is prioritizing constraint as explicitly as capability.

OptimusAI Labs offers Custom Agent development that replaces fragmented checklists with a unified safety architecture.

We build the architecture around the specific boundaries your business requires, integrating supervision at the right layers and defining human handoffs before they are ever needed.

This disciplined approach ensures that your AI risks don’t just stay stagnant, they become progressively smaller as your system learns from its own observability loops.